In January 2026, South Korea became the first country in the world to formally enforce safety requirements for frontier AI systems. The AI Basic Act, which took effect on January 22, establishes mandatory human oversight for "high-impact" AI applications spanning nuclear safety, drinking water production, transport, healthcare, and financial services like credit evaluation. Most of its rules governing high-impact AI are expected to be enforced by August 2026.

The law has drawn sharp reactions from both sides. Local tech startups say it goes too far. Civil society groups say it does not go far enough. But for the global legal community, the more pressing question is this: what does South Korea's regulatory framework mean for cross-jurisdictional AI liability, and how will it interact with the EU AI Act, the emerging patchwork of US state laws, and the growing volume of AI litigation worldwide?

A Three-Way Regulatory Comparison

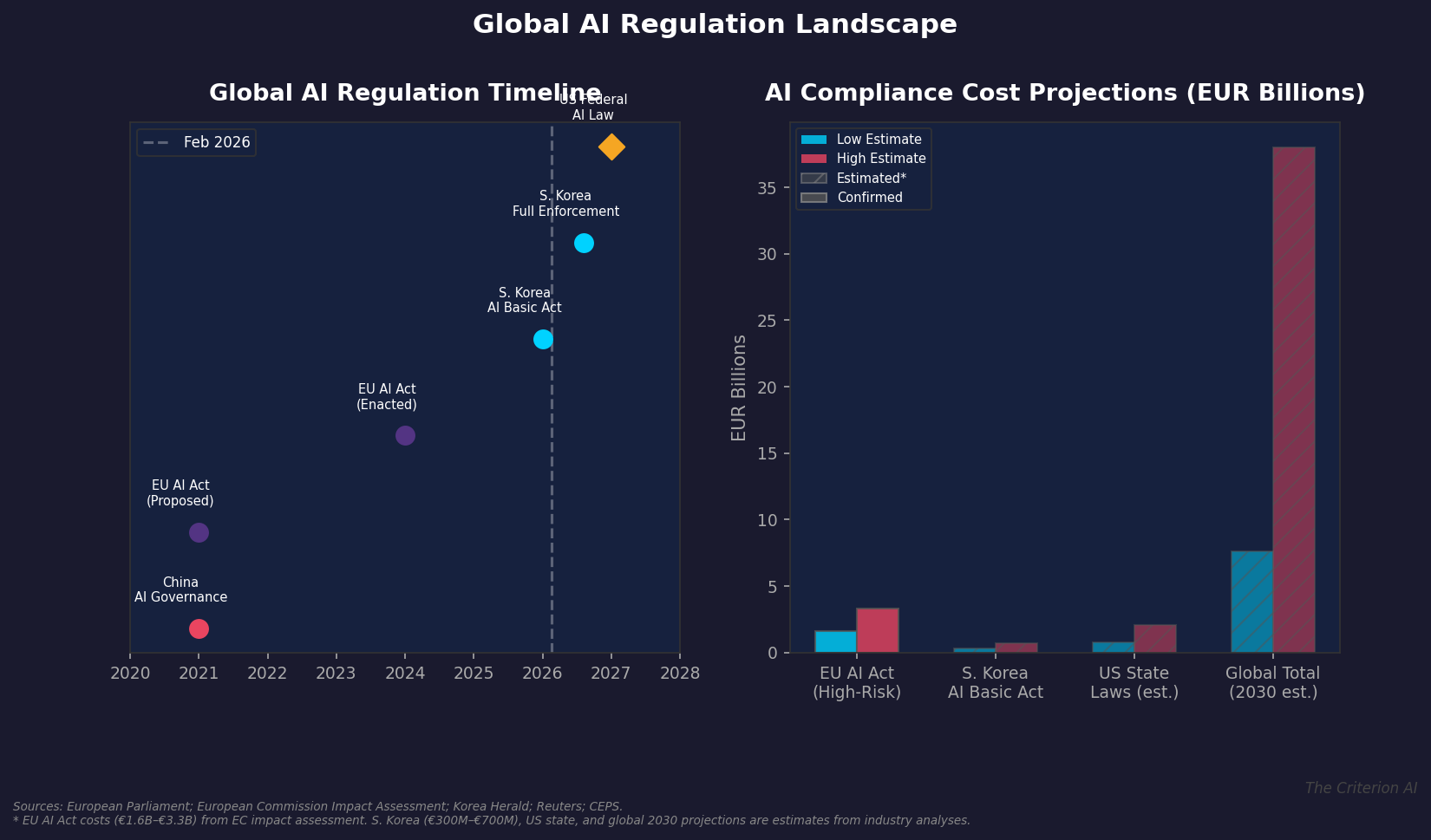

To understand South Korea's significance, it helps to map the global regulatory landscape.

The EU AI Act, proposed in 2021 and enacted in 2024, takes a risk-based approach. It sorts AI systems into four tiers (unacceptable, high, limited, and minimal risk) and imposes obligations that scale with each tier. Prohibited practices include social scoring and, subject to narrow exceptions, real-time remote biometric identification in publicly accessible spaces for law enforcement. High-risk systems face requirements for risk management, data governance, transparency, and human oversight. Obligations phase in between 2025 and 2027.

South Korea's AI Basic Act shares the EU's risk-based philosophy but diverges in important ways. It focuses heavily on frontier AI systems, specifically large-scale models with broad capabilities, and requires companies to ensure human oversight in high-impact sectors. It also mandates labeling of AI-generated content. Critically, South Korea moved faster. The law took effect roughly a year after the National Assembly passed it in December 2024, whereas the EU's obligations phase in over several years.

The United States has no comprehensive federal AI law. Instead, the regulatory landscape consists of several pieces:

- Executive orders. The Biden-era AI Executive Order was revoked in full in January 2025 and replaced by the current administration's own AI orders.
- Sector-specific guidance from agencies like the FTC and FDA.
- A growing number of state-level laws. Colorado's AI Act, effective in 2026, addresses algorithmic discrimination. Illinois's BIPA has been applied to AI-related biometric data.

The result is a patchwork of state and sectoral rules rather than a unified federal framework.

Sources: EU Parliament, Korea Herald, Reuters, CEPS, Axis Intelligence

The Compliance Cost Reality

Regulation comes with costs, and the numbers are significant. The European Commission's initial impact assessment estimated that compliance with the EU AI Act's high-risk provisions would cost between EUR 1.6 billion and EUR 3.3 billion globally, assuming roughly 10% of AI systems qualify as high-risk. Independent analyses have ranged higher. The Center for Data Innovation projected costs exceeding EUR 31 billion over five years. CEPS researchers disputed that figure, arguing that it grossly overstated the costs implied by their own economic analysis.

Looking forward to 2030, estimates for the total global AI compliance market range from EUR 7.6 billion on the conservative end to EUR 38 billion on the high end, according to analysis published in late 2025. South Korea's compliance costs are still being calculated, but early estimates from local industry groups place them between EUR 300 million and EUR 700 million for domestic companies.

For companies operating globally, the math is straightforward but daunting. A firm deploying high-risk AI in the EU, South Korea, and multiple US states may need to comply with three or more distinct regulatory regimes simultaneously, each with its own definitions of risk, its own transparency requirements, and its own enforcement mechanisms.

Cross-Jurisdictional Liability: The Legal Complexity

This regulatory fragmentation creates significant liability exposure. Consider a scenario that is becoming increasingly common: a US-based AI company deploys a healthcare decision-support tool that is used by hospitals in Seoul, Berlin, and Denver. The tool produces a recommendation that leads to patient harm.

Under the EU AI Act, the company faces obligations related to risk management, data quality, and post-market monitoring for high-risk medical AI. Under South Korea's AI Basic Act, the company must ensure human oversight in healthcare applications. Under Colorado's AI Act, the company may face requirements related to algorithmic impact assessments and anti-discrimination provisions.

The legal questions multiply rapidly. Which jurisdiction's standards apply? Can a company be found negligent under one framework but compliant under another? How do courts in one jurisdiction evaluate the adequacy of safety measures designed to comply with another jurisdiction's rules?

These are precisely the questions that require expert witness testimony. An AI expert can help a court understand:

- How regulatory requirements differ across jurisdictions and whether a company's compliance efforts were reasonable given the applicable frameworks.

- Whether the technical design of an AI system met the state of the art for safety in its application domain.

- How to interpret the outputs of algorithmic impact assessments and whether they satisfy legal standards for due diligence.

What South Korea's Law Signals for Litigation Trends

South Korea's move is significant not just for its substance but for its signaling effect. When the Korea Herald reported that South Korea was "first in the world" to establish frontier AI safety requirements, it reinforced a narrative that comprehensive AI regulation is inevitable. Companies that have been waiting for regulatory clarity before investing in compliance now face pressure from multiple directions.

For litigators, the practical implication is clear: AI-related cases will increasingly involve cross-border regulatory analysis. Attorneys will need expert witnesses who can navigate not just the technical dimensions of AI systems but also the regulatory frameworks of multiple jurisdictions. This is especially true in product liability, intellectual property, and employment discrimination cases where AI systems are deployed across borders.

The Expert Witness Opportunity

The convergence of South Korea's AI Basic Act, the EU AI Act's phased enforcement, and the continued absence of US federal legislation creates a unique moment for AI expert testimony. Courts are going to encounter cases involving regulatory frameworks they have never applied, technical systems they have never evaluated, and liability theories that have no established precedent.

In this environment, expert witnesses serve several critical functions:

- Regulatory translation. Explaining what a foreign AI regulation requires and how it compares to domestic standards.

- Technical bridge-building. Connecting regulatory concepts like "high-risk AI" and "human oversight" to the actual engineering practices of AI development.

- Standard of care analysis. Opining on whether a company's AI safety practices met the applicable standard of care given the regulatory landscape at the time.

- Foreseeability assessment. Evaluating whether risks that materialized were foreseeable given the state of AI safety science and the regulatory signals available to the company.

Looking Ahead

South Korea's AI Basic Act is a benchmark, but it is not the final word. As enforcement begins in earnest through 2026, we will see how the law operates in practice: what compliance looks like, how regulators interpret its provisions, and how companies adapt. The EU AI Act's ongoing rollout will provide additional data points. And in the United States, the question of federal AI legislation remains open, though the current political environment makes comprehensive action unlikely in the near term.

For attorneys and companies operating in this landscape, the takeaway is that AI regulation is a global phenomenon, and legal strategy must account for multiple jurisdictions simultaneously. The demand for expert witnesses who can bridge technical, regulatory, and legal domains will only increase as this landscape matures.

The Criterion AI provides expert witness services and litigation support for matters involving artificial intelligence, machine learning, and algorithmic decision-making. For a confidential consultation on an active or anticipated matter, contact us at info@thecriterionai.com or call (617) 798-9715.