On February 15, 2026, Axios broke a story that sent shockwaves through both the defense and AI industries: the Pentagon is threatening to cut off Anthropic, maker of the Claude AI system, over the company's refusal to remove safety guardrails for military applications. The Department of Defense wants AI labs to allow their tools to be used for "all lawful purposes," including weapons development, intelligence collection, and battlefield operations. Anthropic has drawn a line, insisting on restrictions against mass surveillance of Americans and autonomous weapons deployment without human oversight.

This is not just a procurement dispute. It is a legal, ethical, and governance inflection point that will reshape how courts, regulators, and expert witnesses engage with AI for years to come.

The Stakes: Billions in AI Defense Contracts

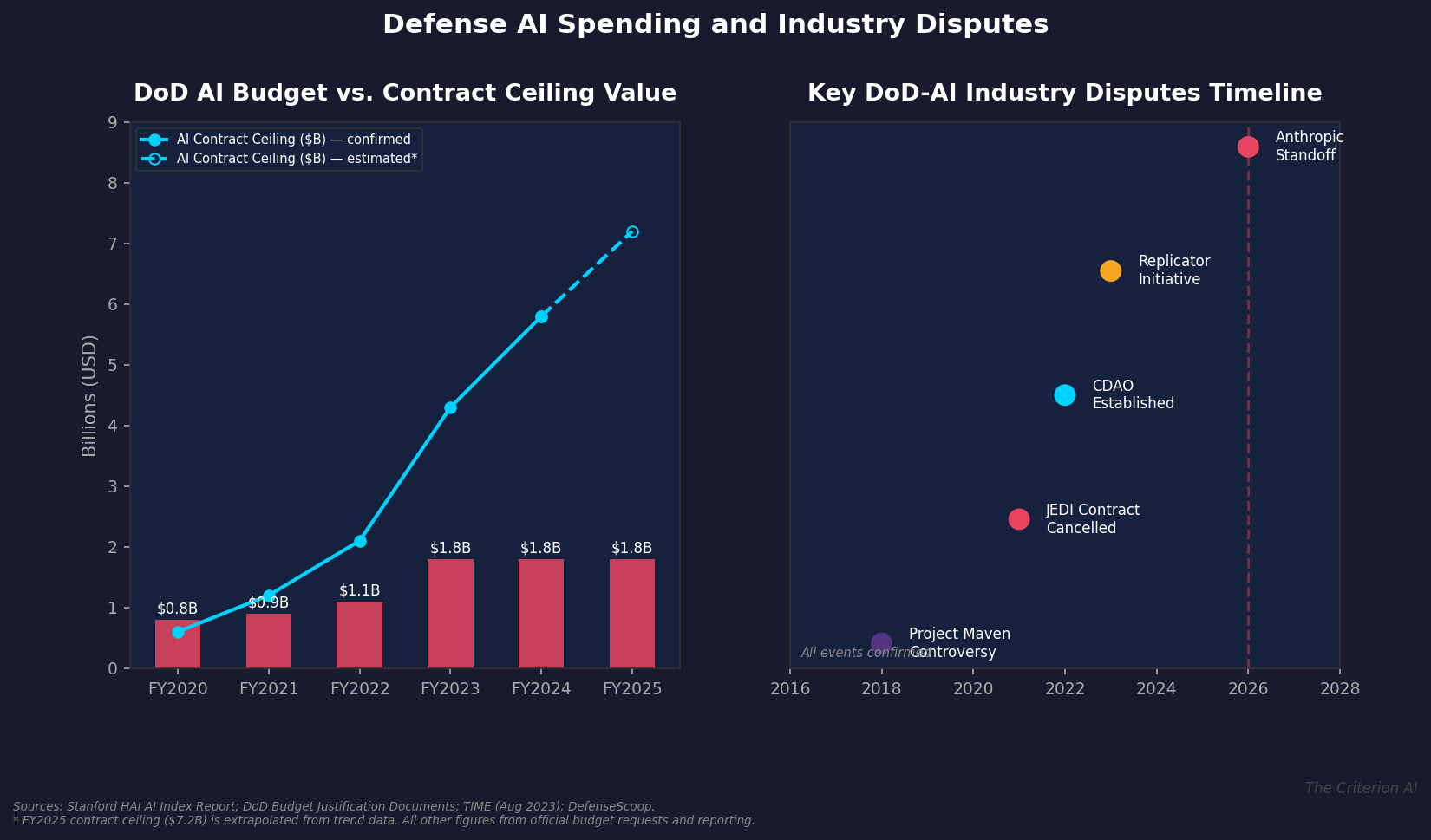

The numbers tell a striking story. The Department of Defense allocated $1.8 billion specifically to artificial intelligence in its FY2025 budget request. The total ceiling value of AI-related defense contracts, meanwhile, ballooned from roughly $269 million in the period before August 2022 to $4.3 billion by August 2023, according to reporting by TIME magazine drawing on Stanford HAI data. Current estimates for FY2025 put that figure well above $7 billion when extended contract options are included.

Sources: DoD Budget Requests, Stanford HAI, TIME, DefenseScoop
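For readers who want to check the scale of that jump, here is a minimal sketch of the arithmetic, using only the two contract-ceiling figures cited above. The variable and function names are illustrative assumptions, not drawn from any source dataset.

```python
# Ceiling values of AI-related defense contracts as cited in this article,
# expressed in millions of US dollars.
before_aug_2022 = 269.0
by_aug_2023 = 4300.0


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def percent_increase(start: float, end: float) -> float:
    """Return the increase from `start` to `end` as a percentage of `start`."""
    return (end - start) / start * 100.0


multiple = growth_multiple(before_aug_2022, by_aug_2023)
increase = percent_increase(before_aug_2022, by_aug_2023)

print(f"Growth multiple: {multiple:.1f}x")
print(f"Percent increase: {increase:.0f}%")
```

On these figures, the contract ceiling grew roughly sixteenfold in about a year. The calculation says nothing about actual obligated spending, which can fall well below a contract's ceiling value.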

Four major AI labs are in negotiations with the Pentagon. Three of them, reportedly OpenAI, Google, and xAI, have agreed to remove safeguards for use on unclassified military systems. Anthropic stands alone. And now the Pentagon is reportedly considering labeling Anthropic a "supply chain risk," a designation that could effectively blacklist the company from government work.

The Legal Architecture: FRE 707 and AI Governance Testimony

For legal practitioners, this dispute raises immediate questions about the role of expert witnesses in AI governance litigation. Proposed Federal Rule of Evidence 707, which would hold machine-generated evidence to the reliability requirements that Rule 702 applies to expert testimony, offers an instructive analogy. Just as courts have grappled with whether automated detection systems meet reliability thresholds, they will increasingly need expert guidance on whether AI safety guardrails constitute reasonable engineering practice or arbitrary commercial restrictions.

Consider the Daubert framework. When the Pentagon argues that Anthropic's safeguards are unnecessary because the military's use cases are "lawful," an expert witness can provide critical testimony on several fronts:

- Technical reasonableness. Are Anthropic's restrictions on autonomous weapons and mass surveillance technically justified? What does the computer science literature say about the failure modes of large language models in high-stakes military contexts?

- Industry standard of care. If three of four major labs have agreed to remove safeguards, does Anthropic's position represent a higher standard of care, or a deviation from industry norms? This question will be central to any future negligence or contract dispute.

- Regulatory foresight. An expert can contextualize this dispute within the broader global regulatory trajectory, from the EU AI Act's list of prohibited AI practices (the Act itself excludes systems used exclusively for military or defense purposes) to South Korea's new AI Basic Act.

From Project Maven to the Anthropic Standoff

This is not the first time the Pentagon and Silicon Valley have clashed over AI ethics. In 2018, Google employees revolted over Project Maven, a Pentagon initiative to use AI for drone surveillance image analysis. Google ultimately declined to renew the contract. In 2021, the Pentagon cancelled the $10 billion JEDI cloud computing contract after years of legal challenges from Amazon. In 2022, the Chief Digital and Artificial Intelligence Office (CDAO) was established to centralize AI procurement. In 2023, the Replicator initiative aimed to field autonomous systems at scale.

Each of these moments generated some combination of litigation, regulatory scrutiny, and demand for expert testimony. The Anthropic standoff follows this pattern but escalates it significantly. Unlike Project Maven, where the debate was internal to a single company, the current dispute involves the federal government threatening to designate an AI company's safety practices as a national security risk.

Why Expert Witnesses Matter Here

AI governance litigation demands a specific kind of expertise that sits at the intersection of computer science, public policy, and law. Attorneys litigating contract disputes between AI vendors and government agencies need experts who can:

- Translate technical AI safety concepts (alignment, guardrails, red-teaming) into language that judges and juries can evaluate.

- Assess whether a company's safety restrictions were commercially reasonable under the circumstances.

- Opine on the state of the art in AI safety research and whether specific guardrails are supported by peer-reviewed evidence.

- Navigate the evolving patchwork of AI regulations across jurisdictions.

The Anthropic dispute will likely generate at least three categories of litigation. First, contract disputes if the Pentagon follows through on its threat and Anthropic challenges the designation. Second, shareholder litigation if Anthropic's valuation is materially affected by exclusion from defense contracts. Third, regulatory challenges as Congress and federal agencies debate whether AI safety restrictions should be mandated or prohibited in defense contexts.

The Broader Implications for AI Governance

Bloomberg's reporting adds a crucial detail: Anthropic's specific red lines involve preventing Claude from being used for mass surveillance of Americans or for developing weapons deployable without human involvement. These are not abstract philosophical positions. They track closely with principles embedded in the EU AI Act, which prohibits certain AI applications, including most real-time remote biometric identification in public spaces by law enforcement, and with the Department of Defense's own Ethical Principles for Artificial Intelligence, adopted in 2020.

The irony is sharp. The Pentagon's own stated ethical framework for AI includes the principle that "AI capabilities will be developed with the requisite safeguards" and that humans will "exercise appropriate levels of judgment." Anthropic's guardrails arguably align more closely with these stated principles than the Pentagon's current negotiating position.

For the legal community, the question is not simply whether Anthropic's safeguards are reasonable. It is whether courts will recognize AI safety practices as a legitimate basis for contract terms, or whether national security imperatives will override them entirely.

Looking Ahead

The Pentagon-Anthropic standoff will not be resolved quickly. It sits at the intersection of executive authority, procurement law, AI safety science, and corporate governance. As this dispute moves through legal and regulatory channels, the demand for qualified expert witnesses who can bridge these domains will only grow.

At The Criterion AI, we track these developments because they define the frontier of AI expert testimony. The questions raised by this dispute, about the legal status of AI guardrails, the standard of care for AI safety, and the role of technical expertise in national security litigation, will shape courtroom practice for the next decade.

The Criterion AI provides expert witness services and litigation support for matters involving artificial intelligence, machine learning, and algorithmic decision-making. For a confidential consultation on an active or anticipated matter, contact us at info@thecriterionai.com or call (617) 798-9715.