On February 13, 2026, the Department of Justice sued Harvard University in federal court in Massachusetts, accusing the school of withholding five years of applicant-level admissions data. The lawsuit alleges Harvard has failed for over 10 months to comply with government requests for documents related to an investigation into whether the university racially discriminates in its admissions process across undergraduate, law, and medical programs.

Four days before the lawsuit was filed, the Department of Defense severed its academic ties with Harvard. Taken together, these actions signal a broader reckoning, not just for Harvard but for how algorithmic and data-driven decision-making in higher education is scrutinized in court.

The Data the DOJ Wants, and Why It Matters

The DOJ's request is sweeping. It seeks applicant grades, test scores, essays, extracurricular activities, and other records spanning five years and three schools. Harvard has maintained it is engaging "in good faith" and is "prepared to engage according to the process required by law."

But this case is not simply about one university's compliance with a federal investigation. It is about what happens when institutions that increasingly rely on algorithmic tools for admissions decisions are forced to open those systems to legal scrutiny.

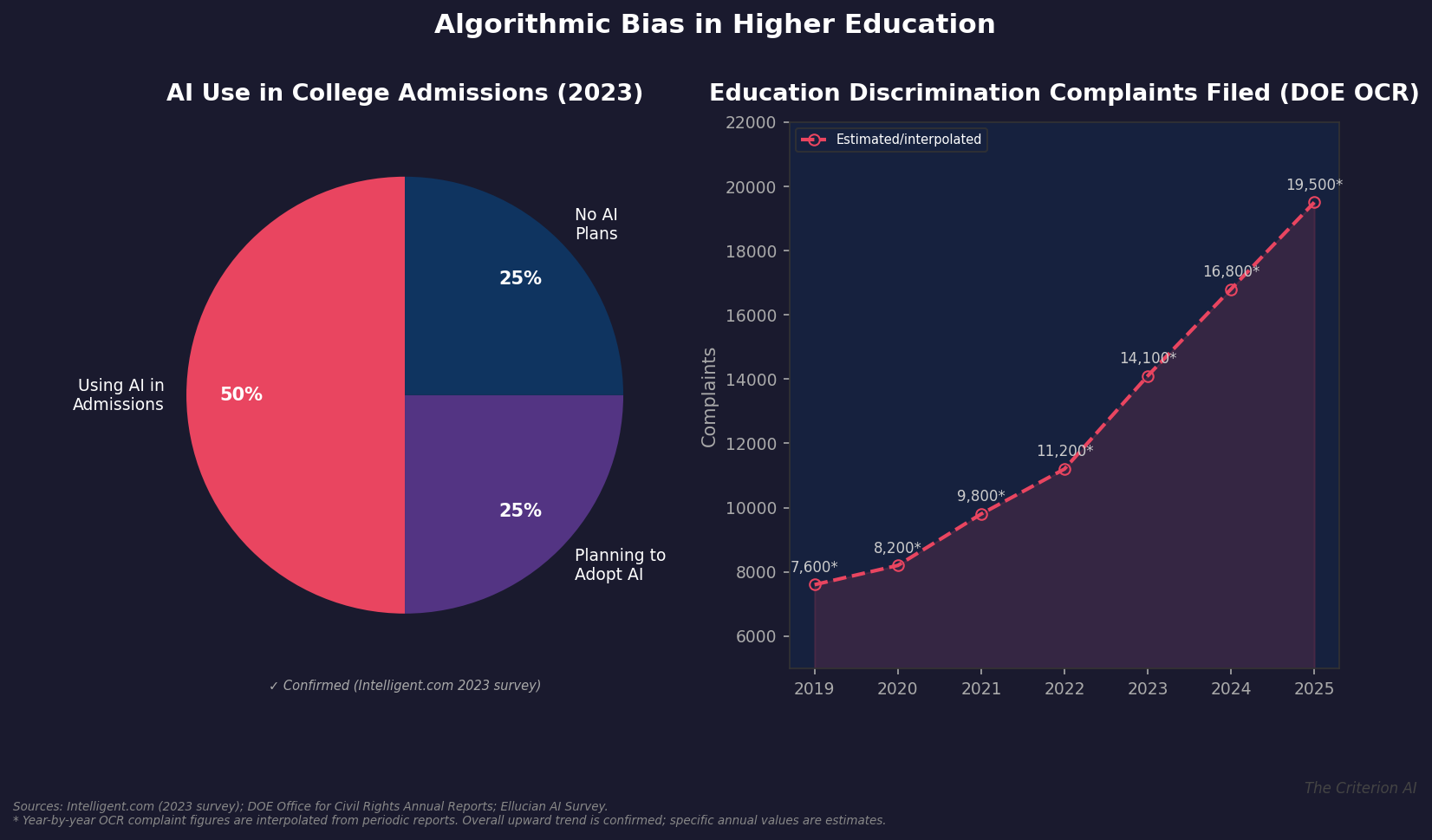

According to a September 2023 survey by Intelligent.com, reported by U.S. News, roughly 50% of higher education admissions offices are already using AI in some capacity. Ellucian's 2024 survey found that AI adoption among higher education professionals surged by 35 percentage points year over year, with 61% using AI in both personal and professional contexts. The trend is accelerating, and the legal infrastructure has not kept pace.

Sources: Intelligent.com (2023), DOE Office for Civil Rights Annual Reports, Ellucian AI Survey

Algorithmic Bias: The Technical Reality

When universities deploy AI-assisted tools in admissions, they introduce a layer of computational decision-making that can embed, amplify, or obscure bias. This is not theoretical. Research has consistently demonstrated that algorithmic systems trained on historical data tend to replicate the biases present in that data.

In the admissions context, this means an AI system trained on a decade of acceptance decisions at an elite university may learn to favor applicants who match historical profiles of admitted students, profiles that reflect the institution's past demographic composition. If that composition was shaped by discriminatory practices, the algorithm carries those patterns forward, often in ways that are difficult to detect without expert analysis.
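A minimal sketch of this mechanism, using made-up numbers: the hypothetical "model" below never sees group membership, only an assumed proxy feature called `school_type`, yet its scores still differ by group because it learned admit rates from past decisions. Every name and figure here is illustrative and not drawn from any real admissions system.

```python
from collections import Counter

def fit_rates(history):
    """Learn P(admitted | school_type) from past decisions.

    Group membership is never an input to this toy model.
    """
    seen, admitted = Counter(), Counter()
    for school_type, was_admitted in history:
        seen[school_type] += 1
        admitted[school_type] += was_admitted
    return {s: admitted[s] / seen[s] for s in seen}

def mean_score_by_group(applicants, model):
    """Average the model's score within each (hypothetical) group."""
    totals, counts = Counter(), Counter()
    for group, school_type in applicants:
        totals[group] += model[school_type]
        counts[group] += 1
    return {g: totals[g] / counts[g] for g in counts}

# Hypothetical history: "feeder" schools were admitted at 60%, others at 20%.
history = (
    [("feeder", 1)] * 60 + [("feeder", 0)] * 40
    + [("other", 1)] * 20 + [("other", 0)] * 80
)
model = fit_rates(history)

# Hypothetical new pool: group X mostly attends feeder schools, group Y mostly does not.
applicants = (
    [("X", "feeder")] * 80 + [("X", "other")] * 20
    + [("Y", "feeder")] * 20 + [("Y", "other")] * 80
)
scores = mean_score_by_group(applicants, model)
print({g: round(s, 2) for g, s in scores.items()})  # roughly 0.52 for X, 0.28 for Y
```

The gap arises only because `school_type` is distributed differently across the two groups, which is the sense in which a historical pattern can be carried forward without any explicit use of a protected characteristic.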

The technical challenge is compounded by opacity. Many AI-assisted admissions tools use machine learning models that do not produce easily interpretable explanations for their outputs. A human reviewer might articulate why they favored one applicant over another. A neural network ranking system typically cannot, at least not in terms that satisfy legal standards of transparency.

The Expert Witness Gap in Education Discrimination

Education discrimination cases have historically relied on statistical experts who analyze admissions data for patterns of disparate impact or intentional discrimination. The landmark Students for Fair Admissions v. Harvard case, decided by the Supreme Court in 2023, featured extensive statistical testimony about how Harvard's admissions process treated Asian American applicants.

The DOJ's current investigation picks up where that case left off, but in a landscape where AI tools have become far more prevalent. This creates a demand for expert witnesses who possess a dual competency: statistical analysis of admissions outcomes and technical understanding of how algorithmic systems generate those outcomes.

Consider the questions an expert witness might need to address in this litigation:

- Model architecture. What type of AI system does the institution use? Is it a simple scoring model, a complex neural network, or a hybrid system with human override capabilities?

- Training data provenance. What historical data was used to train the system, and does that data reflect periods of documented discriminatory practice?

- Feature importance. Which input variables most heavily influence the model's outputs, and do any of those variables serve as proxies for protected characteristics like race or ethnicity?

- Disparate impact analysis. Controlling for legitimate admissions criteria, does the system produce statistically significant differences in outcomes across racial or ethnic groups?

These questions require expertise that goes beyond traditional statistical analysis. They require someone who can evaluate the technical architecture of an AI system while also understanding the legal frameworks governing discrimination claims.
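To make the disparate impact question above concrete, here is a simplified sketch that compares admit rates between two hypothetical groups using a ratio of selection rates and a two-proportion z-test. The counts are invented, and the sketch deliberately does not control for legitimate admissions criteria; a real analysis would typically do so, for example with a regression model.

```python
from math import erf, sqrt

def selection_rate(admitted, applicants):
    """Share of applicants in a group who were admitted."""
    return admitted / applicants

def two_proportion_z(x1, n1, x2, n2):
    """Two-sided z-test for a difference between two admit rates.

    Uses the pooled-proportion standard error and a normal approximation.
    """
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (x1 / n1 - x2 / n2) / se
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)

# Hypothetical counts: (admitted, applicants) per group.
group_a = (60, 200)
group_b = (40, 200)

rate_a = selection_rate(*group_a)
rate_b = selection_rate(*group_b)
impact_ratio = rate_b / rate_a  # lower-rate group relative to higher-rate group
z, p_value = two_proportion_z(*group_a, *group_b)

print(f"rates: A={rate_a:.2f}, B={rate_b:.2f}, ratio={impact_ratio:.2f}")
print(f"z={z:.2f}, p={p_value:.3f}")
```

A statistically significant gap in a raw comparison like this is a starting point for further analysis, not a conclusion about discrimination.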

The Rising Tide of Discrimination Complaints

The broader context matters. Complaints filed with the Department of Education's Office for Civil Rights have been climbing steadily, reflecting increased public attention to equity issues in education and growing willingness to pursue formal complaints. This trend predates the current DOJ lawsuit, but the Harvard case will almost certainly accelerate it.

When a high-profile investigation like this generates national headlines, it has a catalytic effect. Other institutions that use similar tools begin reviewing their own practices. Applicants who were denied admission wonder whether algorithmic systems played a role. Advocacy organizations identify new targets for investigation.

Daubert and the Admissions Algorithm

For any litigation that emerges from the DOJ's investigation, the Daubert standard will be central to how expert testimony about algorithmic bias is received. Under Daubert, as reflected in Federal Rule of Evidence 702, expert testimony must be based on sufficient facts or data, be the product of reliable principles and methods, and reflect a reliable application of those principles and methods to the facts of the case.

AI bias analysis can meet these criteria when conducted properly. The methodologies for algorithmic auditing are well established in the computer science literature, with published frameworks for testing disparate impact, analyzing feature importance, and evaluating model fairness across multiple definitions of equity. A qualified expert can demonstrate that these methods have been tested, subjected to peer review, and accepted within the relevant scientific community.
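As an illustration of what "multiple definitions of equity" can mean in practice, the sketch below computes two commonly discussed fairness measures on invented data: a demographic parity gap (difference in admit rates) and an equal opportunity gap (difference in admit rates among applicants labeled qualified). The `qualified` label is a hypothetical ground truth assumed only for this example; defining such a label in a real admissions setting is itself contested.

```python
def rate(values):
    """Mean of an iterable of 0/1 values."""
    values = list(values)
    return sum(values) / len(values)

def demographic_parity_gap(rows, a, b):
    """Difference in predicted-admit rates between groups a and b."""
    return (rate(pred for g, _, pred in rows if g == a)
            - rate(pred for g, _, pred in rows if g == b))

def equal_opportunity_gap(rows, a, b):
    """Difference in predicted-admit rates among qualified applicants only."""
    return (rate(pred for g, q, pred in rows if g == a and q == 1)
            - rate(pred for g, q, pred in rows if g == b and q == 1))

# Hypothetical rows: (group, qualified, predicted_admit).
rows = (
    [("A", 1, 1)] * 3 + [("A", 1, 0)] * 3 + [("A", 0, 1)] * 1 + [("A", 0, 0)] * 3
    + [("B", 1, 1)] * 2 + [("B", 1, 0)] * 2 + [("B", 0, 0)] * 6
)

dp_gap = demographic_parity_gap(rows, "A", "B")
eo_gap = equal_opportunity_gap(rows, "A", "B")
print(f"demographic parity gap: {dp_gap:.2f}")
print(f"equal opportunity gap:  {eo_gap:.2f}")
```

On this toy data the two measures disagree: overall admit rates differ between the groups, while admit rates among qualified applicants are identical. That kind of divergence is one reason an expert may need to explain which definition was tested and why.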

The challenge is finding experts who can translate these technical methodologies into testimony that is both legally admissible and comprehensible to judges and juries. This is the gap that AI expert witnesses fill.

What This Means for Universities and Counsel

The DOJ v. Harvard lawsuit should prompt every university using AI-assisted admissions tools to conduct a proactive audit. Specifically, institutions should:

- Document their AI systems. Maintain clear records of what tools are used, how they were developed, what data they were trained on, and how their outputs inform human decision-makers.

- Conduct regular bias audits. Test their systems for disparate impact across protected characteristics, using methodologies that would withstand Daubert scrutiny.

- Preserve data. Anticipate that admissions data may be subject to discovery in future litigation or government investigation.

- Engage expert consultants early. Do not wait for a lawsuit to bring in technical expertise. Proactive engagement with AI fairness experts can identify and remediate issues before they become legal liabilities.
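The documentation step in the list above could be supported by a structured, serializable record for each tool. The following is one possible shape, offered as a sketch; the class name, field names, and example values are all hypothetical and do not reflect any regulatory requirement or existing standard.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class AdmissionsToolRecord:
    """Hypothetical inventory entry for one AI-assisted admissions tool."""
    tool_name: str
    model_type: str
    training_data_years: str
    role_in_decision: str
    bias_audits: list = field(default_factory=list)

# Illustrative entry; none of these values describe a real system.
record = AdmissionsToolRecord(
    tool_name="example-essay-scorer",
    model_type="gradient-boosted trees",
    training_data_years="2015-2024",
    role_in_decision="advisory score shown to human readers",
)

# Append each audit as it happens so the history is preserved over time.
record.bias_audits.append(
    {"date": "2026-01-15", "method": "selection-rate comparison"}
)

# Serialize to JSON so the record can be archived alongside admissions data.
serialized = json.dumps(asdict(record), indent=2)
print(serialized)
```

Keeping records in a plain, versionable format like this makes it easier to show later what was known about a tool and when.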

For attorneys representing applicants, advocacy organizations, or government agencies, the DOJ v. Harvard case provides a template for how to approach algorithmic bias claims. The key is pairing traditional statistical analysis with technical AI expertise to build a comprehensive picture of how admissions decisions are made and whether protected characteristics impermissibly influence outcomes.

The Road Ahead

The DOJ's lawsuit against Harvard is just the beginning. As AI becomes more deeply embedded in educational decision-making, the legal questions will multiply. Who is liable when an algorithm discriminates? What level of transparency should institutions be required to provide about their AI systems? How should courts evaluate the reliability of algorithmic bias analysis?

These are the questions that will define the next generation of education discrimination litigation. And they are questions that cannot be answered without expert witnesses who understand both the technology and the law.

The Criterion AI provides expert witness services and litigation support for matters involving artificial intelligence, machine learning, and algorithmic decision-making. For a confidential consultation on an active or anticipated matter, contact us at info@thecriterionai.com or call (617) 798-9715.